Zoë Schiffer: Oh, wow.

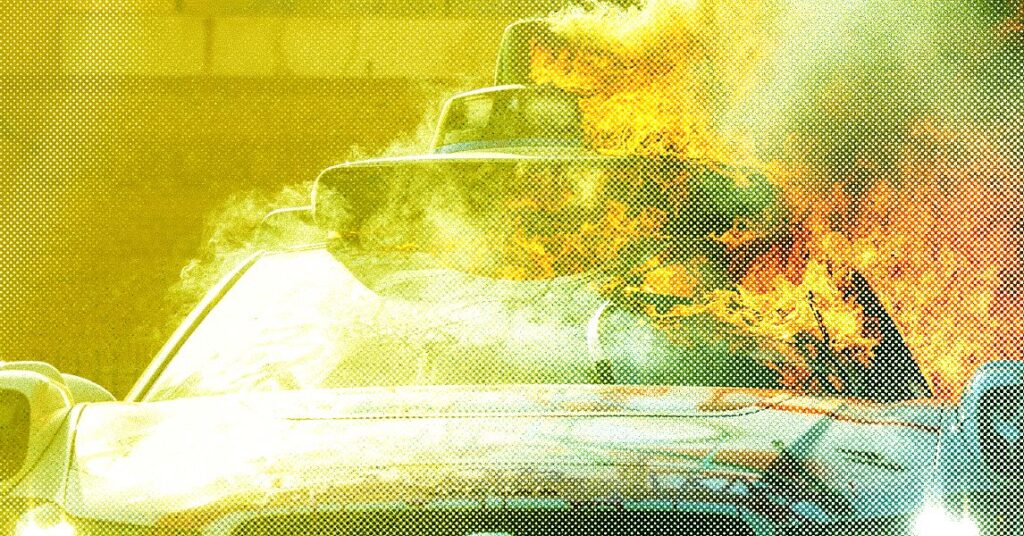

Leah Feiger: Yeah, exactly. Who has Trump’s ear already. This became widespread. And so people went to X’s Grok and they were like, “Grok, what is this?” And what did Grok tell them? No, no. Grok said these weren’t actually photos from the protest in LA. It said they were from Afghanistan.

Zoë Schiffer: Oh. Grok, no.

Leah Feiger: They were like, “There is no credible support. This is misattribution.” It was really bad. It was really, really bad. And then there was another situation where another couple of people were sharing these photos with ChatGPT, and ChatGPT was also like, “Yep, that’s Afghanistan. This isn’t correct, et cetera, et cetera.” It’s not great.

Zoë Schiffer: I mean, don’t get me started on this moment coming after a lot of these platforms have systematically dismantled their fact-checking programs, have decided to purposefully let through a lot more content. And then you add chatbots into the mix who, for all of their uses, and I do think they can be really useful, they’re incredibly confident. When they do hallucinate, when they do mess up, they do it in a way that is very convincing. You’ll not see me out here defending Google Search. Absolute trash, nightmare, but it’s a little more clear when that’s going astray, when you’re on some random, uncredible blog than when Grok tells you with complete confidence that you’re seeing a photo of Afghanistan when you’re not.

Leah Feiger: It’s really concerning. I mean, it’s hallucinating. It’s fully hallucinating, but with the swagger of the drunkest frat boy that you’ve ever unfortunately been cornered by at a party in your life.

Zoë Schiffer: Nightmare. Nightmare. Yeah.

Leah Feiger: They’re like, “No, no, no. I’m sure. I’ve never been more sure in my life.”

Zoë Schiffer: Totally. I mean, OK, so why do chatbots give these incorrect answers with such confidence? Why aren’t we seeing them just say, “Well, I don’t know, so maybe you should check elsewhere. Here are a few credible places to go look for that answer and that information.”

Leah Feiger: Because they don’t do that. They don’t admit that they don’t know, which is really wild to me. There’s actually been a lot of studies about this, and in a recent study of AI search tools at the Tow Center for Digital Journalism at Columbia University, it found that chatbots were “generally bad at declining to answer questions they couldn’t answer accurately. Offering instead incorrect or speculative answers.” Really, really, really wild, especially when you consider the fact that there were so many articles during the election about, “Oh no, sorry, I’m ChatGPT and I can’t weigh in on politics.” You’re like, well, you’re weighing in on a lot now.

Zoë Schiffer: OK, I think we should pause there on that very horrifying note and we’ll be right back.

[break]

Zoë Schiffer: Welcome back to Uncanny Valley. I’m joined today by Leah Feiger, senior politics editor at WIRED. OK, so beyond just trying to verify information and photos, there’ve also been a bunch of reports about misleading AI-generated videos. There was a TikTok account that started uploading videos of an alleged National Guard soldier named Bob who’d been deployed to the LA protests, and you could see him saying false and inflammatory things like the fact that the protesters are “chucking in balloons full of oil,” and one of the videos had close to a million views. So I don’t know, it feels like people have to become a little more adept at identifying this kind of fake footage, but it’s hard in an environment that is inherently contextless like a post on X or a video on TikTok.